14 May 2026

A builder’s view: What good AI looks like in HR software

By Alex Bannon, Engineering Manager at Personio

AI in HR carries a different weight than AI in most other categories. HR systems sit close to decisions that affect pay, opportunity, and people’s livelihoods. That raises the bar for what we build, how we test it, and which ideas we intentionally leave on the cutting-room floor.

I lead Personio’s AI, machine learning, and data efforts for customer-facing launches. When people ask me how Personio builds AI for HR, I point them to the work behind the scenes: data foundations, permission-aware context, clear boundaries, and explicit human checkpoints.

A good starting point is the problem you’re actually trying to solve. HR teams aren’t looking for novelty. They’re looking for fewer preventable errors, less repetitive admin, and more confidence in the numbers and decisions they need to stand behind.

That’s the lens we use at Personio, and it’s what we mean by purposeful AI.

Purposeful AI, in plain terms

Purposeful AI is not AI everywhere. It’s AI used where it can do three things well:

Handle messy inputs (free text, documents, ambiguous requests) and turn them into something structured and usable.

Remove repetitive work that blocks HR teams and managers from doing higher-value work.

Support judgment without replacing it, especially in sensitive people decisions.

That third point is where a lot of our product choices get made. In HR, speed is useful until it removes the moment where someone has to think, ask a follow-up question, and take responsibility for what happens next.

When we build AI, we optimise for outcomes where humans stay accountable. The goal is to reduce the time spent collecting information, checking consistency, and preparing a decision, so HR teams and managers can spend more time on the judgment calls only people can make.

“It’s not ‘what should we do with AI next?’ It’s ‘what pain exists whether or not AI exists, and how do we fix it better and more efficiently?’ That’s how you avoid building things that fail or that people don’t actually use.”

Camille Merritt

Product Manager, Personio

1: AI should support judgment

A lot of scepticism about AI in HR comes down to one fear: that the system makes decisions inside a black box, and people are left to defend an outcome they did not truly choose.

Our approach is the opposite. We use AI to speed up the mundane work around data collection and preparation, then design explicit review points so a human stays responsible for the decision stage.

This shapes concrete product decisions. We focus on AI that helps people gather the right information, spot inconsistencies, and draft a starting point that a human can review, refine, and own.

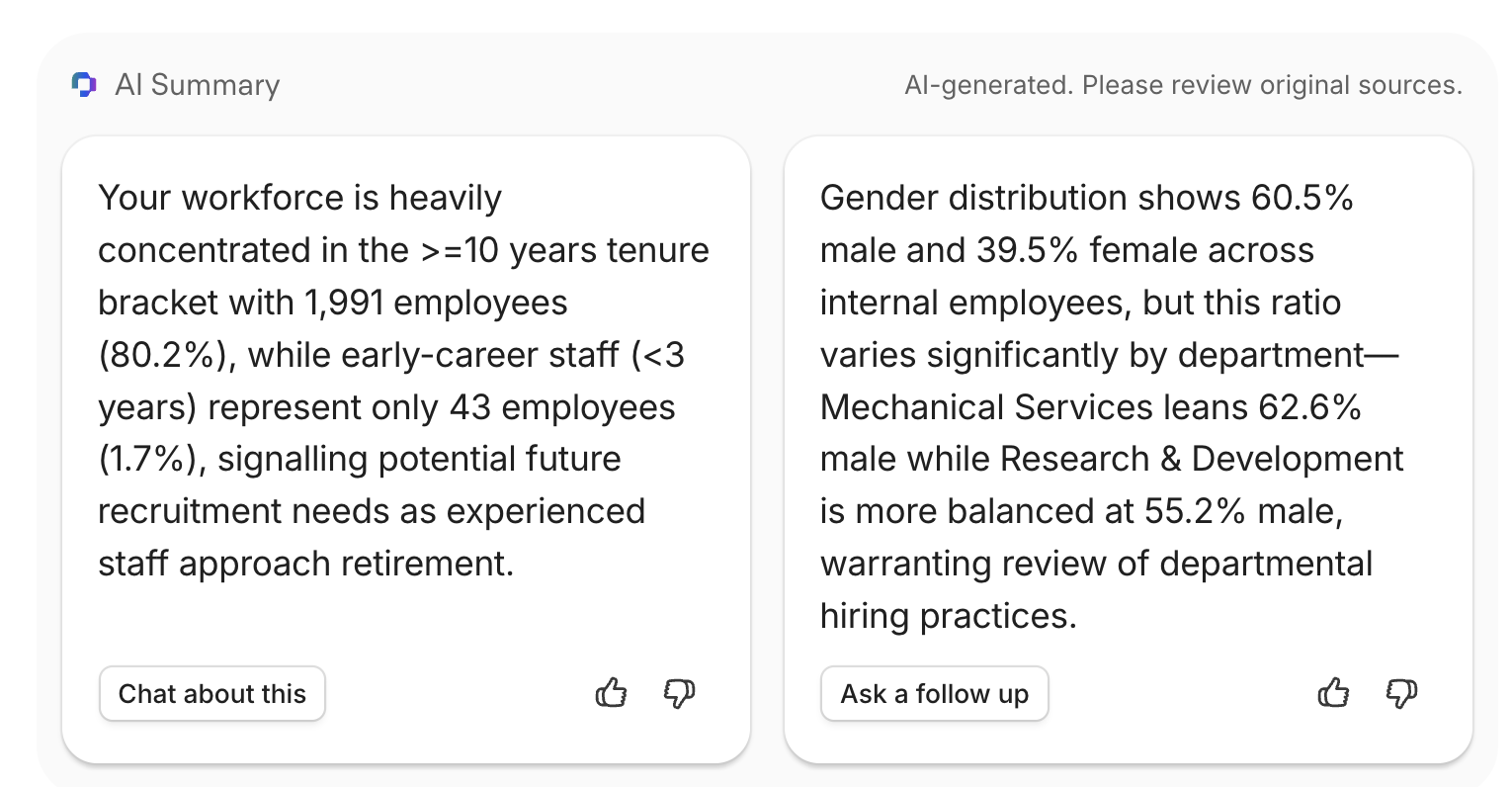

Example: Personio’s People Analytics provides clear AI summaries of the data you already have. The summaries are designed to stay neutral: they take no action and make no implicit recommendations. They simply surface what you may have missed and leave the decision about what to do next to you.

2: Connected context beats a chatbot on top

There’s been a rush across software to ship something that looks like AI. In HR tech, one of the most common patterns is a thin chatbot that only talks to one data source and regurgitates it in nicer prose.

But HR work is cross-functional by nature. Recruiting data, payroll data, time and absence tracking, tenure, attrition, and analytics all connect. AI becomes meaningfully more useful when it can draw from the right sources, with the right permissions, inside the system HR already relies on.

This is why we put so much emphasis on system-of-record foundations: data quality, permissions, and consistent definitions. AI can only be as reliable as the context it is allowed to use and the structure of the data it is allowed to interpret.

Example: When an employee asks “How many vacation days do I get?”, Personio Assistant already knows their region, team, role, and office, so the answer is specific to them rather than a generic policy overview.
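To make person-specific context concrete, here is a minimal Python sketch of answering that question from the asker’s own profile rather than from a generic policy page. Everything in it is a hypothetical illustration, not Personio’s implementation: the `Employee` fields, the `VACATION_DAYS_BY_REGION` table with its placeholder region codes and values, and the function names are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical employee profile. The fields mirror the context named in the
# text (region, team, role, office); they are not Personio's data model.
@dataclass(frozen=True)
class Employee:
    name: str
    region: str
    team: str
    role: str
    office: str

# Placeholder policy table keyed by region. The values are illustrative
# stand-ins, not real statutory or company entitlements.
VACATION_DAYS_BY_REGION = {
    "REGION_A": 25,
    "REGION_B": 30,
}

def lookup_vacation_days(employee: Employee) -> Optional[int]:
    """Resolve the entitlement that applies to this specific employee."""
    return VACATION_DAYS_BY_REGION.get(employee.region)

def answer_vacation_question(employee: Employee) -> str:
    days = lookup_vacation_days(employee)
    if days is None:
        # No matching policy: say so instead of falling back to a generic answer.
        return f"I couldn't find a vacation policy for {employee.region}. Please check with HR."
    return (f"Based on your region ({employee.region}) and office ({employee.office}), "
            f"you get {days} vacation days per year.")
```

The design choice worth noting is the fallback: when no policy matches, the sketch says so rather than returning a generic answer that may not apply to the person asking.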

3: Trust is a requirement, and it can change how fast you ship

Building AI quickly matters because learning quickly matters, and we want to help our customers make the most of this technology. We want short feedback loops, earlier validation, and faster iteration.

But for us, maintaining the trust of our customers is non-negotiable. There is never a moment where shipping sooner outweighs a full security review, clear data handling, or confidence in how a feature behaves in sensitive workflows.

That constraint forces better engineering discipline. We find other ways to move fast, including faster prototyping, faster internal iteration, and tighter testing loops, while keeping trust requirements fixed.

4: Privacy and data boundaries shape the product

HR data is sensitive, and European expectations around privacy are rightly high. For us, that means being explicit about data boundaries, including how we train: we do not train AI models on customer data. We train on synthetic data and publicly available data.

It also means designing features where access rights, permissions, and role-based visibility are treated as first-class product requirements, because they are the basis of trust in HR systems.

Example: Access rights and permissions are honoured across all of Personio’s AI features:

An employee can’t get answers about headcount or compensation data they don’t have permission to see.

Managers can’t get answers about another team’s time off or salaries.

Nobody can access third-party integration pages they aren’t already provisioned for.
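One way to guarantee that behaviour is to filter retrieved records against the asker’s permissions before any of them reach the model, instead of asking the model to withhold what it has already seen. The Python sketch below assumes a hypothetical permission scheme (strings such as `view:time_off` plus a `view:all_teams` grant) and hypothetical `User` and `Record` types; it illustrates the pattern, not Personio’s access model.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

@dataclass(frozen=True)
class User:
    user_id: str
    team: str
    # Hypothetical permission strings, e.g. {"view:time_off", "view:all_teams"}.
    permissions: FrozenSet[str]

@dataclass(frozen=True)
class Record:
    category: str  # e.g. "time_off", "compensation", "headcount"
    team: str
    content: str

def can_view(user: User, record: Record) -> bool:
    """Hypothetical rule set: a category grant plus a team scope."""
    # Category check: the user needs an explicit grant for this kind of data.
    if f"view:{record.category}" not in user.permissions:
        return False
    # Team scope: own team only, unless the user holds a cross-team grant.
    return record.team == user.team or "view:all_teams" in user.permissions

def build_context(user: User, candidates: List[Record]) -> List[Record]:
    """Keep only records the user may see; the model never receives the rest."""
    return [record for record in candidates if can_view(user, record)]
```

Because filtering happens before generation, a badly phrased or adversarial question cannot talk the model into revealing data it was never given.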

5: Reliability and testability matter more than cleverness

AI features need to behave in ways you can test, validate, and explain. In HR, outputs that sound confident can create real-world harm if they’re based on thin context or hard-to-reproduce logic.

This is why we design AI with:

Clear boundaries around what it can do

Visibility into what it used

Review and confirm steps

An audit-friendly approach to accountability

Example: When you connect Personio Assistant to a third-party integration like Confluence, it always cites the original source page each response came from. That lets you dig in further and verify the response for yourself.
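A minimal sketch of the idea in Python: each retrieved passage carries its source, and the answer is returned together with a deduplicated list of the pages it relied on. The `Passage` and `CitedAnswer` types and the `attach_citations` and `render` functions are hypothetical; the sketch does not use the Confluence API or reflect how Personio Assistant is built.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass(frozen=True)
class Passage:
    text: str
    source_title: str
    source_url: str

@dataclass(frozen=True)
class CitedAnswer:
    text: str
    sources: Tuple[Tuple[str, str], ...]  # (title, url) pairs in first-use order

def attach_citations(draft: str, passages_used: List[Passage]) -> CitedAnswer:
    """Pair a drafted answer with every source page it relied on."""
    if not passages_used:
        # No grounding: decline rather than return an unsourced answer.
        return CitedAnswer("I couldn't find this in your connected sources.", ())
    # dict keeps insertion order, so each URL appears once, in first-use order.
    seen = {}
    for passage in passages_used:
        seen.setdefault(passage.source_url, passage.source_title)
    sources = tuple((title, url) for url, title in seen.items())
    return CitedAnswer(draft, sources)

def render(answer: CitedAnswer) -> str:
    """Format the answer followed by numbered source links."""
    lines = [answer.text]
    for i, (title, url) in enumerate(answer.sources, start=1):
        lines.append(f"[{i}] {title}: {url}")
    return "\n".join(lines)
```

Declining when no passage was used is a deliberate choice here: an answer with nothing to cite is exactly the kind of confident-sounding output that is hard to verify.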

What this looks like in practice: Assist, don’t decide

For us, one of the clearest patterns in HR AI is “assist, don’t decide”. The system raises a hand, flags something that does not look right, drafts a starting point, and keeps the human as the accountable decision-maker.

It’s an unflashy philosophy. It also matches the reality of HR work, where the goal is defensible decisions, consistent processes, and trust that compounds over time.
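To make “assist, don’t decide” concrete, here is a small Python sketch of a draft-then-confirm flow with an append-only audit trail. The assistant can draft and flag, but only a named human reviewer can approve or reject, and every step is logged with a timestamp and actor. The `Draft`, `Status`, `assistant_prepare`, and `human_decide` names are hypothetical and illustrate the pattern only; they are not a Personio API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional, Tuple

ASSISTANT = "assistant"  # hypothetical actor name for the AI system

class Status(Enum):
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"

@dataclass
class Draft:
    content: str
    flags: List[str] = field(default_factory=list)
    status: Status = Status.PENDING_REVIEW
    # Append-only trail of (UTC timestamp, actor, action) for later audit.
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def log(self, actor: str, action: str) -> None:
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), actor, action))

def assistant_prepare(content: str, flags: List[str]) -> Draft:
    """The assistant may draft and flag, but never finalise."""
    draft = Draft(content=content, flags=list(flags))
    draft.log(ASSISTANT, "drafted")
    return draft

def human_decide(draft: Draft, reviewer: str, approve: bool,
                 edited_content: Optional[str] = None) -> Draft:
    """Only a named human reviewer can move a draft out of review."""
    if reviewer == ASSISTANT:
        raise PermissionError("the assistant cannot approve its own draft")
    if draft.status is not Status.PENDING_REVIEW:
        raise ValueError("draft has already been decided")
    if edited_content is not None:
        draft.content = edited_content
        draft.log(reviewer, "edited")
    draft.status = Status.APPROVED if approve else Status.REJECTED
    draft.log(reviewer, draft.status.value)
    return draft
```

The audit log records who edited and who decided, so the accountable person is visible after the fact rather than implied.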

See purposeful AI in day-to-day HR work

If you want to start using AI in your day-to-day HR workflows, I’d recommend starting with employee and admin questions, the high-volume requests that constantly interrupt your team.

How many hours have you lost answering routine employee questions, when the right answer already exists somewhere in a policy doc, a shared drive, or an internal wiki? Personio Assistant is designed to help here, with the context around your organisation and your employees, plus the third-party knowledge sources you choose to connect.

Your employees get immediate answers, and HR teams get time back for the work that depends on judgment, context, and human accountability.

Learn more about Personio Assistant.

Alex Bannon

Alex leads Personio’s AI, machine learning, and data efforts for customer-facing launches. He works at the intersection of engineering, product, and trust, focusing on AI that helps HR teams move faster on the work that slows them down, while keeping people firmly accountable for the decisions that matter.