8 May 2026

AI for HR: Where it helps, where it doesn’t, and how to decide

A practical framework for HR and AI decision-making

AI is showing up everywhere in HR software right now. Some of it is genuinely useful. Some of it is theatre. And some of it creates risk in places where you cannot afford ambiguity.

Our view at Personio is simple: the best AI in HR is purposeful. It’s designed around real HR challenges, grounded in the data and permissions you already rely on, and built with clear boundaries so humans stay accountable for decisions that affect people’s lives.

We spend a lot of time speaking with HR leaders about their expectations for AI at work. We hear the same themes repeatedly: less admin, fewer preventable errors, and more confidence in the numbers they share with leadership. We also hear a consistent question: where should AI stay out of HR processes?

This guide gives you a practical way to decide:

Where AI can safely remove friction and save time

Where it can lead to harmful outcomes for employees or decisions you can’t clearly explain

What to ask vendors so you can defend your choices internally

Personio’s POV: AI should be used where it improves the quality and consistency of HR work, with clear boundaries, reliable inputs, and a human decision checkpoint.

Where AI helps in HR

The best early wins tend to be unglamorous HR AI use cases. They sit in workflows that are frequent, time-consuming, and easy to validate.

Across our conversations with HR leaders, the most durable value tends to cluster into three patterns:

1. Drafting, structuring, and summarising

AI can be useful when it helps people get from blank page to first draft, or from long text to a clear structure, as long as a human remains accountable.

This applies to things like:

Drafting manager feedback based on provided inputs

Summarising themes from a set of open-text survey comments

Turning meetings into a structured action plan

Rewriting content for clarity and tone

“People talk a lot about the AI layer, but what really determines whether it helps is what you feed it. Good inputs make AI useful. Weak inputs make it unpredictable.”

Camille Merritt

Product Manager, Personio

2. Data checks and validation

HR teams can spend an eye-watering amount of time fixing issues that should have been caught earlier: missing fields, inconsistent records, mismatched attributes, and errors that only surface when Payroll, Finance, or an employee flags them.

Purposeful AI can help by:

Flagging anomalies

Prompting for missing information

Checking consistency against rules you define

Surfacing “this doesn’t look right” moments early

Example scenario:

A London-based employee is entered with a salary in euros instead of pounds. The error isn’t obvious until Finance flags it. A validation layer that checks “workplace currency vs. salary currency” catches it early.

This is one of the clearest “assist, don’t decide” use cases: the system can raise a hand, but a human confirms what to do next.
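As a rough illustration of the scenario above, a validation rule of this kind could look like the following minimal sketch. The `EmployeeRecord` fields, the `EXPECTED_CURRENCY` mapping, and the `currency_flags` function are hypothetical names chosen for this example, not part of any Personio API. Note that the check only reports mismatches; it never edits a record.

```python
from dataclasses import dataclass

# Hypothetical rule table: workplace country -> expected salary currency.
EXPECTED_CURRENCY = {"GB": "GBP", "DE": "EUR", "IE": "EUR"}


@dataclass
class EmployeeRecord:
    employee_id: str
    workplace_country: str  # ISO 3166-1 alpha-2 code, e.g. "GB"
    salary_currency: str    # ISO 4217 code, e.g. "GBP"


def currency_flags(records):
    """Return human-readable flags. The records themselves are never modified."""
    flags = []
    for r in records:
        expected = EXPECTED_CURRENCY.get(r.workplace_country)
        if expected is None:
            # No rule defined: surface it rather than guess.
            flags.append(f"{r.employee_id}: no currency rule for {r.workplace_country}")
        elif r.salary_currency != expected:
            flags.append(
                f"{r.employee_id}: salary in {r.salary_currency}, "
                f"expected {expected} for workplace {r.workplace_country}"
            )
    return flags


records = [
    EmployeeRecord("E-001", "GB", "EUR"),  # London-based, salary entered in euros
    EmployeeRecord("E-002", "DE", "EUR"),  # consistent, so not flagged
]
flags = currency_flags(records)
for flag in flags:
    print(flag)
```

The design choice worth noticing is that the function returns a list of flags for a person to review instead of correcting the currency itself, which keeps the decision with a human.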

3. Explainability in reporting

AI can be helpful in analytics when it does more than generate a chart. The real value is explainability: helping HR leaders understand what a metric includes, how it’s calculated, and why it changed.

That’s the difference between interesting dashboards and decision-grade reporting.

Where AI doesn’t help, or shouldn’t lead

Some HR workflows should feel fast. Others should feel deliberate. Here are some of the sensitive situations where humans need to maintain complete oversight.

Sensitive decisions that affect pay, opportunity, or employment status

If AI is scoring, ranking, or recommending outcomes in ways that are hard to interrogate, you’re in a high-risk zone.

This includes decisions like:

Compensation changes

Promotions

Terminations

Performance outcomes

Candidate rejection decisions

Purposeful AI can still support these workflows, but it should be constrained to tasks like drafting, consistency checks, and surfacing missing context, with explicit human checkpoints.

“In some HR questions, friction is a feature, not a bug. If AI makes it effortless to dig into sensitive patterns about colleagues, that’s a sign we should pause and rethink.”

Lisa Jones

Product Manager, Personio

Hands-off AI with unclear accountability

Hands-off AI can look efficient, but it creates risk when it produces outputs that feel final in workflows where a human is still accountable.

If you can’t answer these questions clearly, pause:

Who is accountable for the output?

What happens when it’s wrong?

Can we reproduce the result and test it?

Can we override it, and is that override visible?

The trade-off is straightforward: the more sensitive the outcome, the more you should optimise for control, traceability, and a clear decision checkpoint, even if it costs some speed. If a tool can’t show what it used, what it assumed, and where you’re meant to step in, you’re left with a result you can’t confidently defend.

“The red flag for HR is when AI is doing everything for you as opposed to being a thought partner. The minute that HR teams are letting the AI choose the applicant for them, make the hire, write the performance review, you’ve given up that human interaction and you’re losing control of the overall plot.”

Camille Merritt

Product Manager, Personio

The purposeful AI decision framework

When you’re evaluating an AI use case, or an AI vendor, you can usually get to a defensible decision by asking three questions.

1) Inputs: What does it rely on?

AI quality is constrained by input quality. In HR, that means both structured system data and contextual knowledge like policies.

Ask:

What data does it use, and what data does it not use?

Can we control which sources it can access?

Is the data structured, current, and permissioned correctly?

How does it respect permissions and access rights?

Is my data being used to train the model?

“A red flag is not knowing where your data is stored and processed. Another is when a tool has too little context: it will still give you an answer, but it may be based on very little.”

Lisa Jones

Product Manager, Personio

2) Outputs: How predictable and testable is it?

In HR, you want outputs that are:

Predictable (similar inputs lead to similar outputs)

Testable (you can validate accuracy and failure modes)

Transparent (you can explain what it did, at least at a practical level)

Bounded (it doesn’t “get creative” in sensitive contexts)

Treat these four properties as minimum criteria for any HR AI tool.

3) Human checkpoint: Where does accountability sit?

This is the most important question. Purposeful AI makes it obvious who reviews, who approves, and who owns the decision.

In some areas of HR, fast is not the goal. Defensible is. That means no silent automation of outcomes, no opaque scoring that can’t be explained, and no set-and-forget decision-making in high-stakes contexts.
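To make the idea of a human checkpoint concrete, here is a minimal sketch of an approval gate. Everything in it is an assumption for illustration: the `Suggestion` class, its fields, and the reviewer address are hypothetical and do not describe how any particular product works. The point is only that an AI-generated proposal has no effect until a named person approves it, and that each step leaves a visible trail.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Suggestion:
    """An AI-generated proposal that does nothing until a person approves it."""
    summary: str
    sources: list                      # what the tool relied on
    approved_by: Optional[str] = None  # named reviewer, once approved
    audit_log: list = field(default_factory=list)

    def _log(self, event, actor):
        # Record who did what, and when (UTC), so the trail can be reviewed later.
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((stamp, event, actor))

    def approve(self, reviewer):
        self.approved_by = reviewer
        self._log("approved", reviewer)

    def apply(self):
        # No silent automation: refuse to act without a recorded approval.
        if self.approved_by is None:
            raise PermissionError("No human approval recorded")
        self._log("applied", self.approved_by)
        return f"Applied: {self.summary}"


s = Suggestion("Correct salary currency for E-001 to GBP", sources=["workplace: GB"])

try:
    s.apply()          # attempted before any review
    blocked = False
except PermissionError:
    blocked = True     # the gate held

s.approve("hr.admin@example.com")
result = s.apply()
print(blocked, result)
```

In this sketch the reviewer, the approver, and the audit trail are explicit parts of the data model, which is one simple way to make it obvious who owns the decision.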

How AI should (and shouldn’t) show up across common HR workflows

| HR workflow | Where AI can help | Where AI shouldn’t help |
|---|---|---|
| Employee questions and self-service | Drafting answers, summarising policy content, routing people to the right place | Making binding policy interpretations in grey areas without human review |
| Performance and development | Drafting feedback from defined inputs, consistency checks against frameworks, helping managers avoid recency bias | Deciding outcomes, ratings, promotions, or compensation changes |
| Surveys and sentiment | Summarising themes, surfacing patterns to investigate, helping HR prioritise follow-ups | Treating sentiment as truth without context, or triggering automated actions without interpretation |
| Core HR data management | Flagging missing fields, anomalies, and risky inconsistencies, prompting clean-up | Auto-changing sensitive records without approval or auditability |
| Recruiting | Drafting job descriptions, speeding up admin steps, summarising interview feedback after decisions | Rejecting candidates, ranking candidates as a final decision-maker, or hiding rationale |

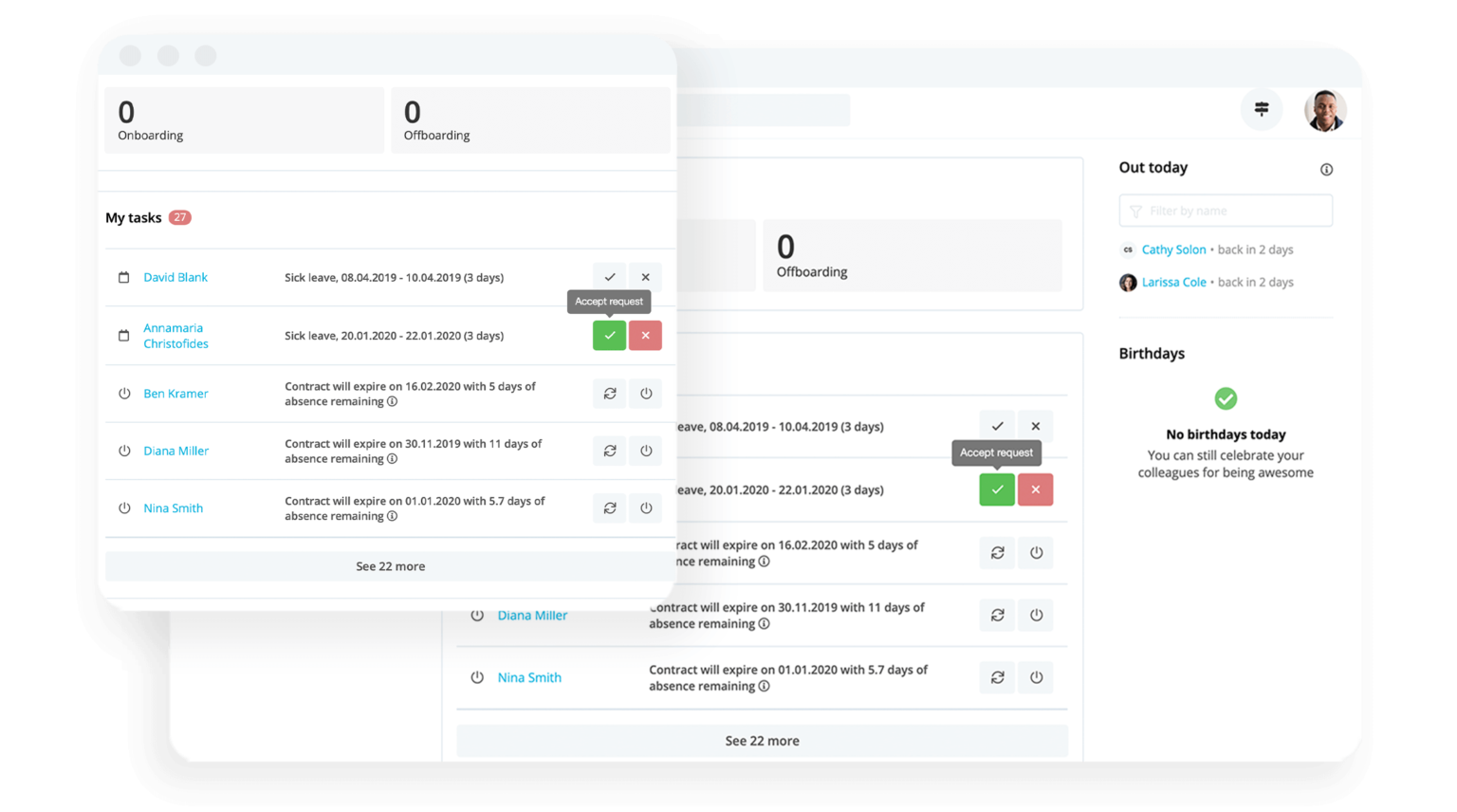

Personio Assistant

If you’re looking for a concrete example of purposeful AI in day-to-day HR work, start with employee and admin questions, the high-volume requests that create constant interruption.

How many hours have you spent answering routine employee questions whose answers already live somewhere in Slack, Google Drive, or another company-wide resource?

Personio Assistant has context on your organisation and your employees, so it can answer these questions immediately. It can also integrate with third-party knowledge sources like Confluence, which gives it more information specific to your organisation. Your employees get immediate answers, and you don’t have to roll your eyes at yet another ticket about the UK holiday calendar.

What to watch out for when evaluating HR AI tools

You don’t need to be an AI expert to spot risky patterns. A few questions will usually surface whether a tool is designed for trust, or designed for a demo.

1. Unclear data handling

If a vendor can’t clearly tell you whether your inputs are used to improve their model, be cautious.

Ask vendors directly:

Do you train models on customer inputs by default?

What is stored, for how long, and where?

What controls do we have?

“A major red flag is any HR system that uses your data to train its models. At Personio, we don’t train on customer data.”

Alex Bannon

Engineering Manager, Personio

2. Confident outputs with thin context

If the AI tool can’t show what it relied on, or it’s operating with too little context, it can still sound convincing. That’s exactly the risk. You won’t know whether it’s grounded in your policies, your data, or a generic best guess.

Ask vendors directly:

What sources does the model use to answer, and can we control them?

How do you handle permissions, access rights, and role-based visibility?

Can we see what resources the system used to produce this output?

“AI can’t be a simple ‘on/off’ toggle. AI tools need to be explicit about what the system has access to, how data is processed, and what isn’t shared with third parties, so customers can use AI confidently in day-to-day work.”

Lisa Jones

Product Manager, Personio

3. Hands-off automation in sensitive workflows

If the pitch is “set it up once and the AI handles it,” ask where the human checkpoint is.

Ask vendors directly:

Which steps require review and confirmation?

Is this something AI should actually do?

What audit trail exists, and can we export it?

“There’s a perception that AI will replace all the work in HR, like a magic bullet. In reality, it’s a tool to help, not to replace.”

Camille Merritt

Product Manager, Personio

4. Promises of perfect accuracy

In HR, you need systems designed for review, correction, and accountability, not systems that promise perfection.

Ask vendors directly:

How do you handle errors, disputes, and corrections?

What failure modes have you tested for, and how do you monitor them?

What does the user see when the system is uncertain?

“LLMs are susceptible to hallucination. There is no 100% accuracy. That is a myth. Especially with sensitive data like salaries or terminations, you need a human checkpoint, rather than expecting AI to be 100% perfect.”

Lisa Jones

Product Manager, Personio

Monday-morning actions: How to start with AI without creating risk

If you’re under pressure to “do something with AI,” you can still move quickly without gambling with trust.

Pick one low-risk workflow to improve, such as employee Q&A, drafting, data checks, or reporting explainability.

Define the human checkpoint in writing: who reviews, who approves, and what good looks like.

Write a short boundary list for your organisation, framed as current guardrails, not permanent bans.

Pressure-test inputs: what data does the workflow require, and is it reliable today?

Decide how you’ll measure success, for example time saved, fewer errors, better consistency, faster cycle completion, or fewer escalations.

Ready to explore purposeful AI in Personio?

If you want to see what purposeful AI looks like when it’s built into real HR workflows, with clear boundaries, reliable inputs, and human decision checkpoints, talk to our team.

FAQs

1) What are the safest AI use cases in HR?

The safest use cases are typically high-volume workflows where outputs are easy to review and validate. Examples include drafting and structuring content, summarising themes, routing employee questions, and flagging data inconsistencies for human review.

2) Where should AI not be used in HR?

Avoid hands-off AI in decisions that affect pay, opportunity, or employment status. If AI is making or effectively driving outcomes in performance ratings, promotions, compensation, terminations, or candidate rejection decisions, the risk profile changes dramatically.

3) How do I evaluate an HR AI vendor quickly?

Use three questions:

What inputs does it rely on, and can you control access to them?

Are outputs predictable and testable, with clear boundaries?

Where is the human checkpoint, and who is accountable?

If a vendor can’t answer those clearly, that’s a decision in itself.

4) What’s a red flag when it comes to data privacy?

A major red flag is unclear data handling, especially if you can’t get a straight answer on whether customer inputs are used to train models, what is stored, and where processing happens. Use the vendor’s security documentation as a baseline, and push for specifics.

5) How do I start using AI in HR without creating risk?

Start with one low-risk workflow, define the human checkpoint, and write down your current boundaries. Then measure impact and expand only when you can explain, test, and govern the workflow end to end.